UVC - Use UVC to send an image

Preparation

- Ameba x 1

- Logitech C170 web cam x 1

- Micro USB OTG adapter x 1

Example

First, open the sample code: “File” -> “Examples” -> “AmebaUVC” -> “uvc_jpeg_capture”.

Modify the following settings in the sample code:

- The SSID and password of your WiFi network.

- The IP address of the receiver. On Linux, you can run ifconfig to find it.

The wiring is the same as the previous UVC example.

Next, on the Linux computer, run: nc -l 5001 > my_jpeg_file.jpeg

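This command listens on TCP port 5001 and writes everything it receives to my_jpeg_file.jpeg. It keeps running until the sender closes the socket or an error occurs.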
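Once nc has accepted the connection, it simply copies the incoming bytes to its output until the sender closes the socket, which shows up as read() returning 0. The C++ sketch below illustrates that loop with POSIX read(); receiveUntilClose is a hypothetical name, and the sketch skips the socket()/bind()/listen()/accept() setup, so it works on any readable file descriptor, such as an accepted socket or a pipe.

```cpp
#include <unistd.h>  // read, write, close, pipe
#include <cassert>
#include <cerrno>
#include <cstddef>
#include <cstdint>
#include <vector>

// Appends everything read from fd to out until the peer closes the
// connection (read() returns 0). Returns false if a read error occurs.
bool receiveUntilClose(int fd, std::vector<std::uint8_t>& out) {
    std::uint8_t chunk[4096];
    for (;;) {
        ssize_t n = read(fd, chunk, sizeof chunk);
        if (n > 0) {
            out.insert(out.end(), chunk, chunk + n);  // keep the received bytes
        } else if (n == 0) {
            return true;   // end of stream: the sender closed the socket
        } else if (errno != EINTR) {
            return false;  // a real error ("some problem occurs")
        }
        // errno == EINTR: interrupted by a signal, so simply read again
    }
}
```

Writing the collected bytes to a file afterwards gives the same result as redirecting nc's output with > my_jpeg_file.jpeg.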

In this example, Ameba sends one image to the receiver and then closes the socket.

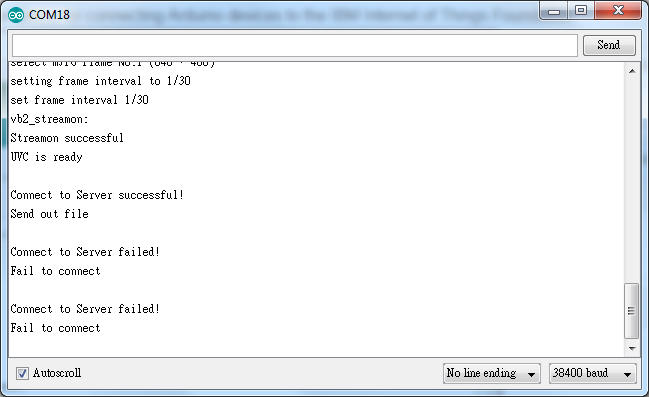

Compile and upload the sample to Ameba, then press the reset button.

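After the connection is established, Ameba sends an image to the receiver. The message “Fail to connect” then appears. This is expected and means the example has finished successfully: nc exits after receiving the image, so Ameba's next connection attempt fails.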

At the same time, the nc command on the Linux side exits. The received image, “my_jpeg_file.jpeg”, is in the working directory of the Linux terminal. If you run the nc command again, you will receive an image again, since Ameba tries to send the image every second.
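To check that the received file is complete, you can look at its first and last bytes: a JPEG file starts with the SOI marker (FF D8) and ends with the EOI marker (FF D9), so a transfer that was cut off usually lacks the final FF D9. The helpers below, looksLikeJpeg and readFile, are hypothetical and not part of the Ameba sample. This is only a quick heuristic, not a decoder; a file with extra bytes after the EOI marker would be rejected even if it displays correctly.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <fstream>
#include <iterator>
#include <string>
#include <vector>

// Returns true if buf starts with the SOI marker (FF D8) and ends with the
// EOI marker (FF D9). Only a sanity check for truncated transfers.
bool looksLikeJpeg(const std::vector<std::uint8_t>& buf) {
    if (buf.size() < 4) return false;
    const std::size_t n = buf.size();
    return buf[0] == 0xFF && buf[1] == 0xD8 &&
           buf[n - 2] == 0xFF && buf[n - 1] == 0xD9;
}

// Loads a whole file (e.g. my_jpeg_file.jpeg) into memory.
// Returns an empty vector if the file cannot be opened.
std::vector<std::uint8_t> readFile(const std::string& path) {
    std::ifstream in(path, std::ios::binary);
    if (!in) return {};
    return std::vector<std::uint8_t>(std::istreambuf_iterator<char>(in),
                                     std::istreambuf_iterator<char>());
}
```

For example, looksLikeJpeg(readFile("my_jpeg_file.jpeg")) should be true for a complete capture.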

Code Reference

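Initialize UVC in JPEG capture mode (MJPEG format, 640x480, 30 fps):

UVC.begin(UVC_MJPEG, 640, 480, 30, 0, JPEG_CAPTURE);

In loop(), save the JPEG to the predefined buffer jpegbuf. The return value is the length of the image:

int len = UVC.getJPEG(jpegbuf);

Then send jpegbuf to the receiver over TCP.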
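The sample's own TCP code is not reproduced here. As a rough illustration of the sending step, the C++ sketch below uses POSIX write() on a host computer instead of Ameba's WiFi client API. The key point is that a single write or send on a socket may accept only part of the buffer, so the sender loops until all len bytes of jpegbuf are out. sendAll is a hypothetical name, and the sketch assumes jpegbuf is a byte array.

```cpp
#include <unistd.h>  // write, read, close, pipe
#include <cassert>
#include <cerrno>
#include <cstddef>
#include <cstdint>

// Writes all len bytes of buf to fd (e.g. a connected TCP socket),
// retrying after partial writes. Returns false on an error other than EINTR.
bool sendAll(int fd, const std::uint8_t* buf, std::size_t len) {
    std::size_t sent = 0;
    while (sent < len) {
        ssize_t n = write(fd, buf + sent, len - sent);
        if (n > 0) {
            sent += static_cast<std::size_t>(n);  // advance past accepted bytes
        } else if (n < 0 && errno == EINTR) {
            continue;  // interrupted by a signal before writing; retry
        } else {
            return false;  // write error (or no progress): give up
        }
    }
    return true;
}
```

On a host, a call such as sendAll(sock, jpegbuf, len) after a successful connect() followed by close(sock) would mirror the example's "send one image, then close the socket" behavior.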